Artificial intelligence has moved rapidly from academic research into industrial practice, but its adoption in nuclear engineering remains under cautious review. This reticence is not ideological; rather, it reflects the reality that nuclear systems operate under extreme regulatory, safety, and liability constraints. Under these conditions, any analytical tool must be demonstrably reliable, auditable, and explainable. As a result, while the nuclear sector is increasingly interested in the opportunities that AI presents, any such approach must be able to quantify uncertainty, expose its decision logic, and integrate explicitly with physical models and engineering judgement.

digiLab, a UK-based technology company spun out of the University of Exeter, has positioned itself squarely within this space. Its work focuses on uncertainty quantification, probabilistic machine learning, and explainable AI methods intended for safety-critical engineering environments such as nuclear power. According to the company, the goal is not to replace engineering expertise but to augment it with mathematically rigorous tools that allow engineers to understand not just what a model predicts, but how confident that prediction is and why.

“We specialise in trustworthy, explainable AI,” explains Amanda Niedfeldt, Head of Business Development at digiLab. “That means methods that are auditable, traceable, and explainable, which is essential for regulatory and safety-critical sectors such as nuclear, energy infrastructure, and environmental monitoring.”

From academic research to applied engineering

digiLab emerged from research that emphasised methods combining physical understanding, data, and probabilistic reasoning, and that academic lineage continues to shape the company’s approach.

“The lab is a high-tech research organisation,” Niedfeldt notes, adding: “Our technology is built on that research base, similar in depth to what you might see at organisations like DeepMind, but focused on practical applications rather than purely theoretical advances.”

At the centre of digiLab’s offering is what it refers to as its Uncertainty Engine, a platform designed to build, deploy, and manage probabilistic machine-learning models. The platform supports both code-based workflows for advanced users and graphical workflows intended to make these methods accessible to engineers without deep software backgrounds.

While much public attention has focused on large language models, digiLab argues that these represent only a narrow subset of artificial intelligence and are often ill-suited to nuclear applications. “When people hear AI, they immediately think of ChatGPT and large language models,” Niedfeldt says. “Those are useful for some things, but they are a small sliver of what artificial intelligence actually is.”

Instead, digiLab’s work centres on probabilistic machine learning rooted in Bayesian statistics, an approach whose theoretical foundations date back to the eighteenth century but whose practical application has only recently become feasible at scale.

Probabilistic models and uncertainty quantification

Uncertainty quantification (UQ) lies at the core of digiLab’s platform. In contrast to deterministic machine-learning models that return a single answer, probabilistic models produce distributions of possible outcomes. This distinction is critical in engineering contexts where understanding confidence and risk is as important as the nominal result.

“Many machine-learning methods will give you an answer without telling you how they got there,” Niedfeldt explains. “With probabilistic methods, you get a distribution of potential results, which tells you how confident the model is based on the data and information available.”

The company’s research and applied work makes extensive use of Gaussian processes, a class of probabilistic models well suited to engineering problems with sparse data. A Gaussian process provides a mean prediction and an associated uncertainty that varies with the inputs. This allows engineers to identify both the regions where the model is well supported by data and the regions where predictions are extrapolative and therefore less reliable.
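The following minimal Python sketch of Gaussian-process regression illustrates the mean-plus-uncertainty behaviour described above. It assumes a zero-mean prior, a squared-exponential kernel with fixed, hand-picked hyperparameters, and a small jitter term for numerical stability. It is a textbook construction, not digiLab’s Uncertainty Engine, and every function name in it is hypothetical. Trained on four points, it should report a small standard deviation between the data points and one close to the prior value of 1 far outside them.

```python
import math


def rbf(a, b, length_scale=1.0, variance=1.0):
    """Squared-exponential (RBF) covariance between two scalar inputs."""
    return variance * math.exp(-0.5 * ((a - b) / length_scale) ** 2)


def cholesky(A):
    """Return lower-triangular L with A = L L^T (small symmetric positive-definite A)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L


def forward_sub(L, b):
    """Solve L y = b for lower-triangular L."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y


def backward_sub(L, y):
    """Solve L^T x = y for lower-triangular L."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def gp_predict(x_train, y_train, x_test, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at each test input."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(x_train)]
         for i, a in enumerate(x_train)]
    L = cholesky(K)
    alpha = backward_sub(L, forward_sub(L, y_train))  # alpha = K^-1 y
    out = []
    for xs in x_test:
        k_star = [rbf(xs, xt) for xt in x_train]
        mean = sum(k * a for k, a in zip(k_star, alpha))
        v = forward_sub(L, k_star)  # v = L^-1 k*, so v.v = k*^T K^-1 k*
        var = rbf(xs, xs) - sum(vi * vi for vi in v)
        out.append((mean, math.sqrt(max(var, 0.0))))
    return out


# Four hypothetical "simulation runs" of sin(x) on [0, 3].
x_train = [0.0, 1.0, 2.0, 3.0]
y_train = [math.sin(x) for x in x_train]
for x, (m, s) in zip([1.5, 6.0], gp_predict(x_train, y_train, [1.5, 6.0])):
    print(f"x = {x}: mean = {m:.3f}, std = {s:.3f}")
```

In practice, hyperparameters such as the length scale would usually be fitted to the data rather than fixed. A library implementation would also be preferred over hand-rolled linear algebra; pure Python is used here only to keep the sketch self-contained.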

The practical implication is a shift in how model outputs are used. “Instead of the computer telling you ‘A, B, or C,’ you might see that there is an 80% probability of B and a 20% probability of C,” Niedfeldt says. “That allows an engineer to decide whether more testing is required or whether the level of confidence is sufficient for the decision at hand.”

This probabilistic framing aligns closely with existing engineering practice, where margins, safety factors, and confidence intervals are already widely used. Niedfeldt nonetheless emphasises that digiLab’s tools are intended to support, not supplant, human expertise: “In the sectors we work in, machine learning is a tool to inform decision-making, not replace it.”

Embedding physical knowledge

A recurring criticism of some AI approaches is that they lack any grounding in real-world physics. digiLab addresses this issue explicitly by embedding prior knowledge, constraints, and physical relationships into its models, as Niedfeldt explains: “In a Bayesian way of thinking, you start with a prior. A scientist or engineer already knows something about the world. When they study materials, for example, they have expectations about physical properties and those constraints and parameters can be built into the model.”
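The prior-then-update idea can be made concrete with the simplest Bayesian model available. In this model, a Normal prior on an unknown material property is updated with a handful of measurements whose scatter is assumed known. The numbers below are invented for illustration, and the property could be something like a strength in MPa. The function name is hypothetical, and real materials models would be far richer.

```python
import math


def normal_update(prior_mean, prior_sd, samples, noise_sd):
    """Conjugate update of a Normal prior on an unknown mean, given samples with
    known Gaussian measurement noise. Returns (posterior_mean, posterior_sd)."""
    prior_precision = 1.0 / prior_sd ** 2
    data_precision = len(samples) / noise_sd ** 2
    post_precision = prior_precision + data_precision
    post_mean = (prior_mean * prior_precision + sum(samples) / noise_sd ** 2) / post_precision
    return post_mean, math.sqrt(1.0 / post_precision)


# Hypothetical: the engineer expects roughly 300 (sd 30) before testing,
# then measures three samples with a known test scatter of 20.
samples = [320.0, 335.0, 328.0]
mean, sd = normal_update(300.0, 30.0, samples, 20.0)
print(f"posterior: {mean:.1f} +/- {sd:.1f}")
```

The posterior mean lands between the engineer’s prior expectation and the sample average. Its uncertainty is smaller than either source alone would give, which is the sense in which three or four samples can already be informative.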

This approach contrasts with purely data-driven methods that attempt to infer relationships without reference to underlying physics. While such methods can perform well in data-rich environments, they are poorly suited to nuclear applications, where data may be limited, expensive to obtain, or entirely unavailable for new designs.

“The majority of models don’t inherently understand physics,” Niedfeldt observes. “That’s why we focus on physics-based, engineering, and scientific applications, where expert knowledge is essential.”

Sparse data and early-stage nuclear systems

One of the most persistent challenges in nuclear engineering, particularly in emerging areas such as fusion and advanced reactors, is the lack of operational data. In some cases, no full-scale system has ever been built. Niedfeldt argues that this is precisely where uncertainty quantification offers the greatest value: “One advantage of our approach is that you can get started with sparse and limited data. If you only have three or four material samples, or you are just beginning to build a simulation, you can still use these methods to understand what you know and, importantly, what you don’t know.”

These methods are designed to operate across the entire facility lifecycle, from early-stage design and optimisation through to operation and eventual decommissioning. In the design phase, probabilistic surrogate models can be built around computationally expensive simulations. These surrogates approximate the behaviour of the full simulation while explicitly tracking uncertainty. “If I build a simulation of a reactor subsystem, I know it is wrong to some degree,” Niedfeldt explains. “The question is how wrong it is and where that matters.” She continues: “That allows you to decide where to invest your limited budget. You might find that uncertainty in one model has little impact on the overall outcome, while uncertainty in another is critical. That’s where additional testing or simulation can be focused.”
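One common way to ask which uncertainty matters is variance-based (Sobol) sensitivity analysis. This approach propagates input uncertainty through a model and measures what share of the output variance each input is responsible for. The sketch below uses a standard pick-freeze Monte Carlo estimator of first-order indices. It runs on a deliberately simple, hypothetical two-input model with independent standard-normal inputs. The source does not say which sensitivity method digiLab uses, so treat this as one illustrative option. With expensive simulations, the model calls would normally go to a cheap surrogate rather than to the simulation itself.

```python
import random
import statistics


def toy_model(x1, x2):
    """Hypothetical stand-in for a subsystem model: output is far more sensitive to x1."""
    return 10.0 * x1 + x2


def first_order_indices(model, n_inputs, n_samples=20000, seed=0):
    """Pick-freeze Monte Carlo estimate of first-order Sobol indices,
    assuming independent standard-normal inputs."""
    rng = random.Random(seed)
    A = [[rng.gauss(0.0, 1.0) for _ in range(n_inputs)] for _ in range(n_samples)]
    B = [[rng.gauss(0.0, 1.0) for _ in range(n_inputs)] for _ in range(n_samples)]
    fA = [model(*row) for row in A]
    mean_A = statistics.fmean(fA)
    var_A = statistics.pvariance(fA)
    indices = []
    for i in range(n_inputs):
        # Each C row keeps input i from A and takes every other input from B,
        # so Cov(f(A), f(C)) estimates the variance explained by input i alone.
        fC = [model(*[a[j] if j == i else b[j] for j in range(n_inputs)])
              for a, b in zip(A, B)]
        cov = statistics.fmean([ya * yc for ya, yc in zip(fA, fC)]) - mean_A * statistics.fmean(fC)
        indices.append(cov / var_A)
    return indices


s1, s2 = first_order_indices(toy_model, 2)
print(f"share of output variance from x1: {s1:.2f}, from x2: {s2:.2f}")
```

For this toy model, the exact first-order indices are 100/101 and 1/101, so the estimate should attribute almost all of the output variance to x1. In the article’s terms, x1 is where extra testing or simulation would pay off.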

This approach contrasts with traditional conservative design strategies, which often apply large safety margins uniformly. While conservative margins remain essential, digiLab argues that a more informed allocation of effort can reduce unnecessary conservatism without compromising safety.

“If scientists are allowed to work indefinitely, they will keep refining until they are extremely confident,” Niedfeldt notes. “But if we waited until we had perfect simulations, we wouldn’t have working reactors in the next 30 years. Engineering has always been about making informed decisions under uncertainty.”

The nuclear sector’s embedded safety culture places a premium on traceability and justification. Any analytical tool used to support design or operation must be justifiable to the relevant regulatory authorities. This requirement has historically limited the adoption of opaque algorithms. “The linchpin is trust,” Niedfeldt says. “It’s not just ‘what is the answer?’ but ‘how did you get that answer, and can I rely on it?’”

Recent high-profile failures of poorly governed AI systems in other sectors have reinforced these concerns. While such incidents may have limited consequences elsewhere, in nuclear engineering the stakes are far higher. “In nuclear, you’re signing off on decisions you’re liable for,” Niedfeldt observes. “You need to be confident in the output.”

By producing explicit uncertainty estimates and maintaining clear links between data, model assumptions, and results, probabilistic approaches offer a pathway toward regulatory acceptance. They align more naturally with existing safety case structures, which already rely on quantified margins and confidence arguments.

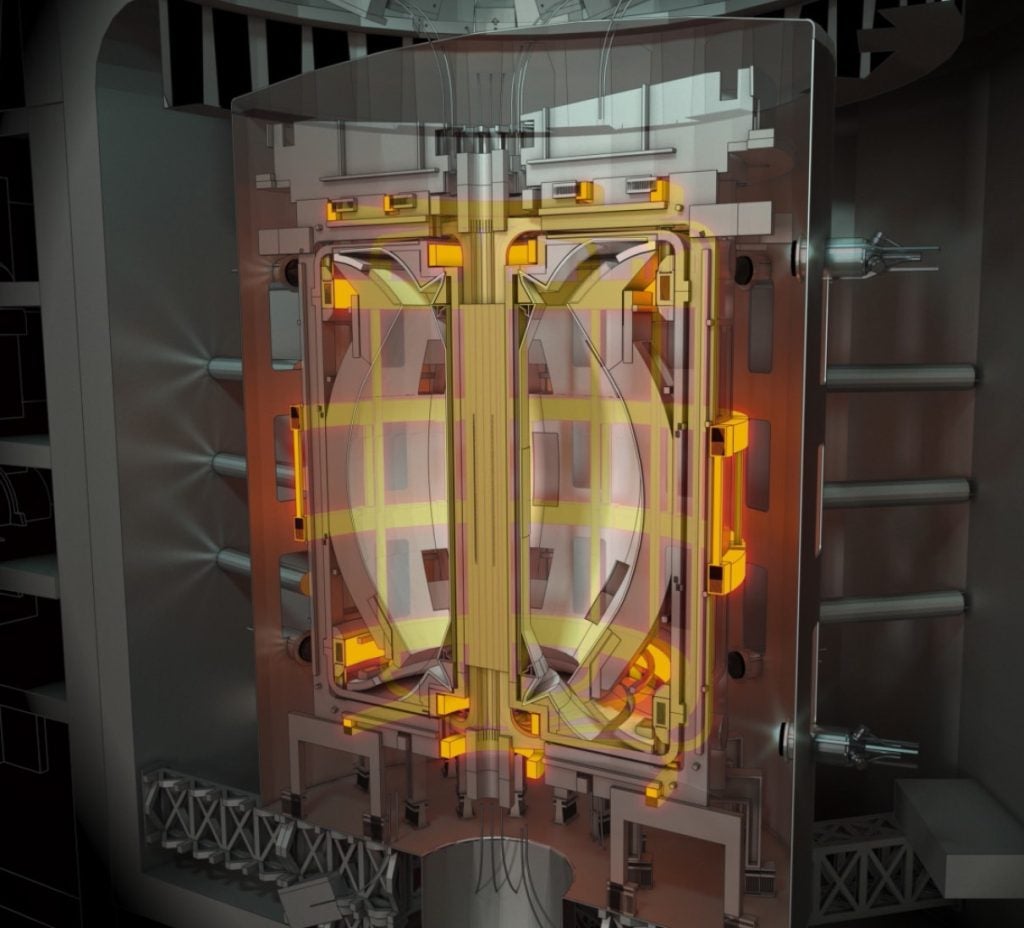

Fusion as an AI testbed

The company’s most extensive nuclear engagement to date has been with the UK Atomic Energy Authority (UKAEA) and with the newly formed organisation to deliver the UK’s first fusion plant: UK Industrial Fusion Solutions (UKIFS). The collaboration began around four years ago, with digiLab initially acting as an uncertainty quantification partner. “We were brought in specifically for uncertainty quantification,” Niedfeldt explains, adding: “That role has grown alongside UKAEA’s Compute Division, as they look to adopt machine learning in a controlled and scalable way.”

Rather than running isolated pilot studies, UKAEA is deploying digiLab’s platform on-premises so that its engineers and scientists can use it more widely. This reflects a shift from experimental application to institutional capability building.

One major programme has focused on plasma physics simulations, which are computationally intensive and can take days or weeks to run. In this case, digiLab’s tools are used to determine where additional simulations will provide the most information. “Instead of running hundreds of simulations, they can run tens and reach the same level of understanding,” Niedfeldt says. “That saves a significant amount of computational time and resource.”
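Choosing where to run the next simulation is often framed as sequential (active) design. The sketch below uses the simplest rule, uncertainty sampling. It fits a Gaussian-process surrogate and runs the simulation next wherever the surrogate’s predictive standard deviation is largest. The simulation, kernel settings, and candidate grid are all hypothetical, and practical tools typically use richer acquisition criteria. Nothing here describes UKAEA’s or digiLab’s actual workflow.

```python
import math


def rbf(a, b, length_scale=0.3):
    """Squared-exponential covariance with unit signal variance."""
    return math.exp(-0.5 * ((a - b) / length_scale) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting (small dense systems)."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][c] * x[c] for c in range(i + 1, n))) / M[i][i]
    return x


def posterior_std(x_train, x, noise=1e-6):
    """GP posterior std at x. It depends only on where runs were made, not on their outputs."""
    K = [[rbf(p, q) + (noise if i == j else 0.0) for j, q in enumerate(x_train)]
         for i, p in enumerate(x_train)]
    k_star = [rbf(x, p) for p in x_train]
    v = solve(K, k_star)  # v = K^-1 k*
    var = rbf(x, x) - sum(k * vi for k, vi in zip(k_star, v))
    return math.sqrt(max(var, 0.0))


def expensive_simulation(x):
    """Hypothetical stand-in for a simulation that takes days to run."""
    return math.sin(3.0 * x) + x


candidates = [i / 50.0 for i in range(101)]  # candidate inputs on [0, 2]
x_train = [0.0, 2.0]                         # two initial runs
y_train = [expensive_simulation(x) for x in x_train]  # would feed the surrogate's mean
initial_worst = max(posterior_std(x_train, x) for x in candidates)

for _ in range(6):
    # Uncertainty sampling: run next where the surrogate is least certain.
    next_x = max(candidates, key=lambda x: posterior_std(x_train, x))
    x_train.append(next_x)
    y_train.append(expensive_simulation(next_x))

final_worst = max(posterior_std(x_train, x) for x in candidates)
print("runs:", sorted(x_train))
print(f"largest std on the grid: {initial_worst:.3f} -> {final_worst:.3f}")
```

A Gaussian process with fixed hyperparameters has a posterior variance that does not depend on the observed outputs. As a result, this particular rule simply spreads runs out to fill gaps. Criteria that also use the outputs, for example to target regions where the response changes quickly, are a natural next step.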

Another application involves diagnostic and sensor placement for the STEP fusion reactor programme. In extreme environments where sensors are difficult and expensive to replace, placement decisions made during design have long-term consequences. As Niedfeldt observes: “Once they’re installed, it is difficult, expensive or sometimes impossible to move, replace, or repair them.” She explains how digiLab’s tools help: “We look at where to place sensors to get the maximum information from the machine during operation.”
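Sensor placement can be posed in a similarly probabilistic way. Suppose the quantity to be monitored is modelled as a Gaussian process over candidate positions. One simple criterion is then to add sensors greedily, each time choosing the site that most reduces the total posterior variance of the field. The sites, kernel, noise level, and function names below are hypothetical. Other criteria, such as mutual information, are also widely used, and the source does not specify which one digiLab applies.

```python
import math


def prior_covariance(sites, length_scale=0.15):
    """Hypothetical GP prior covariance of an unmeasured field at candidate sensor sites."""
    return [[math.exp(-0.5 * ((a - b) / length_scale) ** 2) for b in sites] for a in sites]


def greedy_sensor_placement(cov, n_sensors, noise=0.01):
    """Greedily choose sites that most reduce the total posterior variance (trace).

    Observing site c with Gaussian noise is a rank-one covariance update:
        cov[i][j] -= cov[i][c] * cov[c][j] / (cov[c][c] + noise)
    so the trace drops by sum_j cov[j][c]**2 / (cov[c][c] + noise).
    """
    cov = [row[:] for row in cov]  # work on a copy
    n = len(cov)
    chosen = []
    for _ in range(n_sensors):
        best = max(
            (c for c in range(n) if c not in chosen),
            key=lambda c: sum(cov[j][c] ** 2 for j in range(n)) / (cov[c][c] + noise),
        )
        chosen.append(best)
        col = [cov[i][best] for i in range(n)]
        denom = cov[best][best] + noise
        for i in range(n):
            for j in range(n):
                cov[i][j] -= col[i] * col[j] / denom
    return chosen, sum(cov[i][i] for i in range(n))


sites = [i / 20.0 for i in range(21)]  # 21 hypothetical candidate positions on [0, 1]
prior = prior_covariance(sites)
chosen, remaining = greedy_sensor_placement(prior, n_sensors=4)
print("sensor positions:", sorted(sites[c] for c in chosen))
print(f"total variance: {sum(prior[i][i] for i in range(len(sites))):.2f} -> {remaining:.2f}")
```

The posterior covariance depends only on where the sensors are, not on what they will eventually read. A criterion like this can therefore be evaluated entirely at the design stage, before the machine exists, which is exactly when placement decisions have to be made.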

Fusion has emerged as an early adopter of advanced digital and AI-driven methods, in part because it lacks the legacy systems and regulatory constraints found in the nuclear fission sector. Niedfeldt sees this as an opportunity for cross-sector learning: “Fusion has to adopt these technologies. They’re dealing with problems humans can’t easily hold in their heads, and many things are still not well understood.” She continues: “There’s a real opportunity for intentional transfer of technology from fusion into fission. What’s learned about diagnostics, uncertainty, and model integration can feed back into more established nuclear applications.”

The same methodologies apply beyond fusion, including to fission reactors, small modular reactors (SMRs), and other complex industrial systems. Looking ahead, Niedfeldt anticipates that probabilistic machine learning will become a standard part of engineering education and practice. “In the future, every engineer and research scientist will use these methods,” she predicts, comparing their adoption to that of tools such as MATLAB in previous decades.

However, scaling adoption does present challenges. For example, many engineers currently in industry were not trained in machine learning or probabilistic methods, and wholesale retraining is unrealistic.

“We don’t think everyone needs to become a coder,” Niedfeldt says. “The challenge is making these technologies accessible through workflows that fit existing engineering practice.” This philosophy underpins digiLab’s platform design, which supports both scripting-based and visual, drag-and-drop interfaces. The objective is to lower barriers to entry while maintaining methodological rigour.

Building trust

While nuclear remains a core focus, digiLab is expanding into other sectors with similar characteristics, including aerospace, national infrastructure, and environmental monitoring. Despite this breadth, the company emphasises that its mission remains centred on safety, reliability, and the responsible use of AI.

“There are many ways AI can be misused,” Niedfeldt says. “But in engineering and scientific domains, it has enormous potential to help us understand systems better, reduce risk, and make safer decisions.” By grounding machine learning in probabilistic reasoning, physical understanding, and explicit uncertainty, digiLab is attempting to bridge the gap between emerging digital capability and the nuclear industry’s long-standing demand for trust.